The neural network takes the board position as input and outputs a vector of move probabilities (policy) and a position evaluation (value). Once trained, this network is combined with a Monte-Carlo Tree Search (MCTS), using the policy to narrow the search down to high-probability moves, and using the value in conjunction with a fast rollout policy to evaluate positions in the tree.

AlphaZero evaluates positions using non-linear function approximation based on a deep neural network, rather than the linear function approximation used in classical chess programs. The algorithm is a more generic version of the AlphaGo Zero algorithm that was first introduced in the domain of Go. Starting from random play, and given no domain knowledge except the game rules, AlphaZero achieved a superhuman level of play in chess and shogi as well as in Go. The final peer-reviewed paper, with various clarifications, was published almost one year later in the journal Science under the title “A general reinforcement learning algorithm that masters chess, shogi, and Go through self-play”.

AlphaZero is a chess and Go playing entity by Google DeepMind based on a general reinforcement learning algorithm with the same name. On Decem, the DeepMind team around David Silver, Thomas Hubert, and Julian Schrittwieser, along with former Giraffe author Matthew Lai, reported on their generalized algorithm, combining deep learning with Monte-Carlo Tree Search (MCTS).

Figure 4: Go world champion Lee Sedol’s reaction to AlphaGo’s move 37

On move 37 in game two of the best-of-five match, AlphaGo made a play that was described as “unimaginable” to human intuition. The level of unconventionality of this play in Go would be similar to a chess player sacrificing their queen in order to capture a knight. But despite how unorthodox the move was, it gave AlphaGo the upper hand, which allowed it to eventually take game two.

By being self-taught, AlphaGo was able to make a move that was unthinkable for humans, which shows that AI has the capability to find unique solutions to problems that humans would not think to try.

And with the release of AlphaZero, whose general-purpose algorithm mastered three different games rather than just one, it makes one wonder what else machine learning and AI can be applied to.

To decide on a move, the decision tree is traversed with a Monte Carlo search algorithm (see reference 6), with the root node holding the current board state sᵣₒₒₜ. For each state s, the traversal algorithm chooses moves that have high move probability, low visit count, and high value for continued exploration, until it reaches a terminal game state. The search returns a vector that represents a probability distribution over the possible moves. Finally, a move is chosen that has high value but also high probability.

At the end of the game, the outcome is encoded as a variable z, with a win being 1, a draw 0, and a loss -1. The value of z is measured against the scalars vₜ for the moves taken during the game and placed into a loss function.
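The search step described above can be sketched in a few lines of Python. This is a minimal illustration, not DeepMind's implementation: the names `Edge`, `select_move`, `search_policy`, and `value_loss` are hypothetical, the PUCT-style scoring formula and the constant `c_puct` are assumptions chosen as one common way to combine "high probability, low visit count, high value", and the squared-error form of the value loss is likewise assumed, since the text only says that z and vₜ are "placed into a loss function".

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Edge:
    """Statistics for one move out of a tree node (hypothetical structure)."""
    prior: float            # move probability from the network's policy output
    visits: int = 0         # how often the search has followed this move
    value_sum: float = 0.0  # sum of the values backed up through this move

    def mean_value(self) -> float:
        # Average backed-up value; 0.0 for a move that was never visited.
        return self.value_sum / self.visits if self.visits else 0.0


def select_move(edges: Dict[str, Edge], c_puct: float = 1.5) -> str:
    """Pick the move to explore next, favouring high value, high prior
    probability, and low visit count (assumed PUCT-style score)."""
    total = sum(e.visits for e in edges.values())

    def score(e: Edge) -> float:
        # The bonus grows with the prior and shrinks as the move is visited.
        bonus = c_puct * e.prior * math.sqrt(total + 1) / (1 + e.visits)
        return e.mean_value() + bonus

    return max(edges, key=lambda move: score(edges[move]))


def search_policy(edges: Dict[str, Edge]) -> Dict[str, float]:
    """Normalise visit counts into a probability distribution over moves."""
    total = sum(e.visits for e in edges.values())
    if total == 0:
        raise ValueError("search has not visited any move yet")
    return {move: e.visits / total for move, e in edges.items()}


def value_loss(z: float, values: List[float]) -> float:
    """Mean squared error between the outcome z (1, 0, or -1) and the
    value estimates v_t, assuming all are from the same player's view."""
    return sum((z - v) ** 2 for v in values) / len(values)


if __name__ == "__main__":
    root = {
        "a": Edge(prior=0.6, visits=10, value_sum=2.0),
        "b": Edge(prior=0.3),
        "c": Edge(prior=0.1),
    }
    print(select_move(root))            # the unvisited move with the larger prior
    print(search_policy(root))
    print(value_loss(1.0, [0.5, 1.0]))
```

In this sketch an unvisited move with a reasonable prior can outrank a well-explored move, which is how the visit count keeps the search from fixating on a single line too early.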
AlphaZero and Deep Blue share technical similarities in their game engines. Both use a decision tree representation to hold possible future board states that could result from the current board state, with the current board state as the root node of the tree.

Figure 3: IBM’s Chess Grandmaster consultant Joel Benjamin playing against Deep Blue

AlphaZero uses a deep neural network (p, v) = f(s), which takes the board state s as its input and has parameters θ. f(s) outputs a vector p of move probabilities, one for each action a, and a scalar v that estimates the outcome z of the game from board position s.
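To make the interface (p, v) = f(s) concrete, here is a toy stand-in written in plain Python. It is only a sketch of the input and output shapes: the class `ToyNetwork`, its single linear layer, the random initialisation, and the use of `tanh` to keep v between -1 and 1 are all assumptions for illustration, and a real network would be far deeper.

```python
import math
import random
from typing import List, Tuple


def softmax(logits: List[float]) -> List[float]:
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


class ToyNetwork:
    """Hypothetical stand-in for f with parameters theta: one linear layer
    feeding a policy head (p) and a value head (v)."""

    def __init__(self, n_inputs: int, n_moves: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        # theta: one weight row per move for the policy, one row for the value.
        self.policy_w = [
            [rng.uniform(-0.1, 0.1) for _ in range(n_inputs)]
            for _ in range(n_moves)
        ]
        self.value_w = [rng.uniform(-0.1, 0.1) for _ in range(n_inputs)]

    def __call__(self, s: List[float]) -> Tuple[List[float], float]:
        # Policy head: one score per move, normalised into probabilities.
        logits = [sum(w * x for w, x in zip(row, s)) for row in self.policy_w]
        p = softmax(logits)
        # Value head: a single number squashed into the range [-1, 1].
        v = math.tanh(sum(w * x for w, x in zip(self.value_w, s)))
        return p, v


if __name__ == "__main__":
    f = ToyNetwork(n_inputs=9, n_moves=9)
    board = [1.0, 0.0, -1.0, 0.0, 1.0, 0.0, 0.0, -1.0, 0.0]  # made-up encoding
    p, v = f(board)
    print(len(p), round(sum(p), 6), v)
```

The only property the search relies on is the shape of the output: a probability for every move and one scalar estimate of the final result.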

AlphaZero also learned Go and shogi, defeating its predecessor AlphaGo after 30 hours of training, as well as the top shogi engine Elmo after only 2 hours.

AlphaZero and its predecessors are not the first programs to beat humans at our own game. AlphaZero is commonly compared to IBM’s Deep Blue, which won a game against the reigning world chess champion Garry Kasparov in 1996 and famously defeated him in a full match in 1997, becoming the first computer system to do so.

In 2016, AlphaGo beat the reigning world champion Lee Sedol.

In 2017, AlphaZero was created as a successor to AlphaGo Zero, an improved version of AlphaGo. This time, the program was not designed solely to play Go, but also to play any other two-player board game.

Starting from the basic rules of chess, after just 4 hours of self-learning AlphaZero mastered chess and outperformed the reigning AI champion, Stockfish 9.

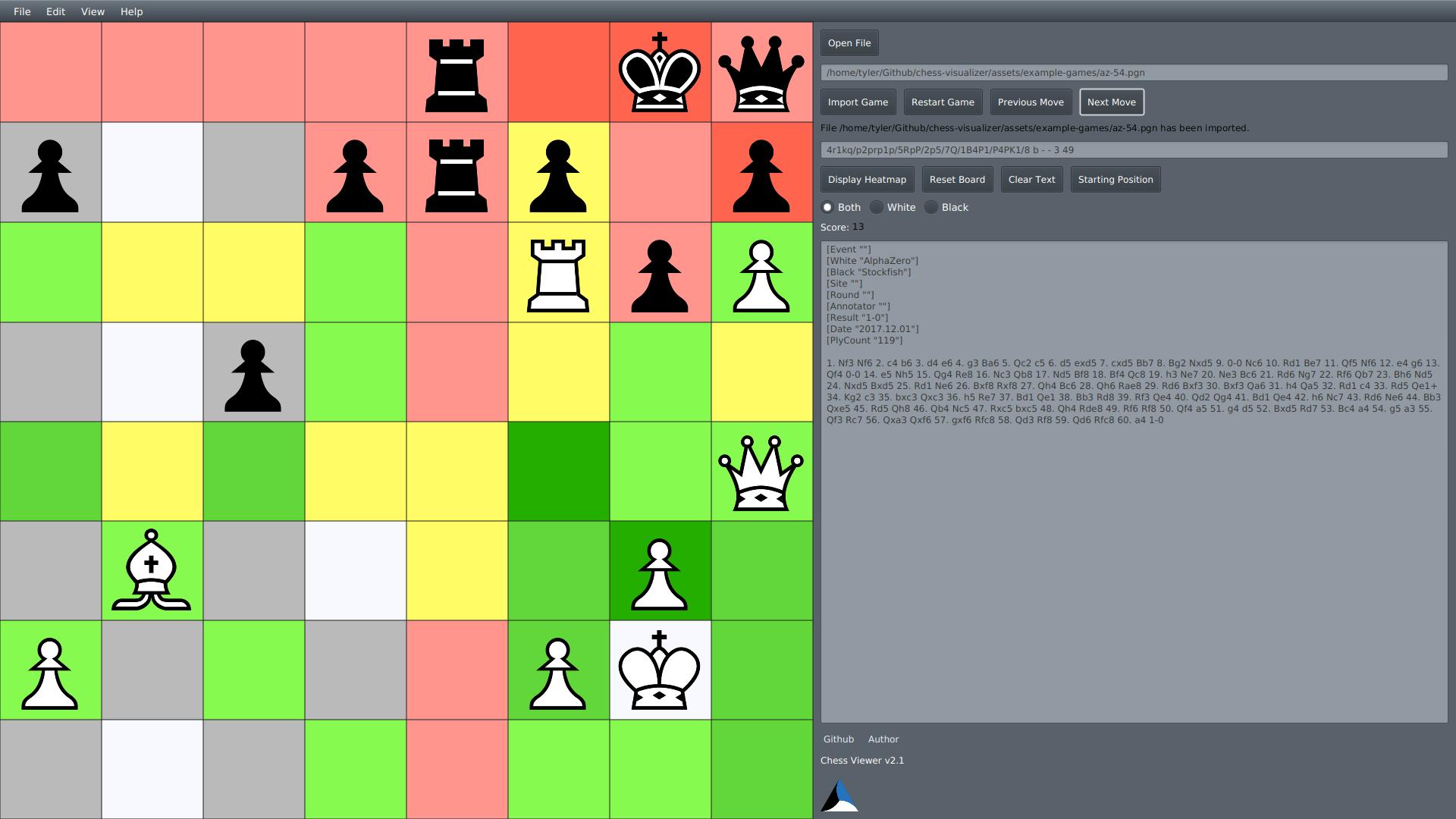

Figure 1: Different iterations of DeepMind’s machine learning game engines

A year later after its development in 2015, AlphaGo won against European Go champion Fan Hui, thus becoming the first Go program to beat a professional player without handicaps.